The Standard Model of particle physics has survived everything thrown at it for over 50 years. A 27-kilometre ring of superconducting magnets under the French-Swiss border may finally be proving it wrong.

New results from CERN’s Large Hadron Collider show that a specific type of subatomic particle decay, charmingly known as a “penguin”, doesn’t behave the way the Standard Model says it should. The anomaly, which has been building since 2015, now sits at 4 sigma significance. That means that, if the Standard Model is correct, there is roughly a one in 16,000 chance that random statistical fluctuations in the data would produce a deviation at least this large.

The finding has been accepted for publication in Physical Review Letters. Particle physicists are paying close attention.

What the Standard Model Is — and Why It’s Frustrating

The Standard Model is physics’ best description of fundamental particles and the forces that govern them. Built on quantum mechanics and Einstein’s special relativity, it predicts with extraordinary precision how matter behaves at the smallest scales. It has withstood more than 50 years of increasingly rigorous testing.

But physicists know it’s incomplete. It doesn’t explain gravity or dark matter, the invisible substance that makes up roughly a quarter of the universe’s total mass and energy. It offers no account of why particles have such wildly different masses. For decades, researchers have been searching for cracks: any measurement that deviates from the model’s predictions. Most apparent anomalies, like a disputed measurement of the W boson mass, have evaporated under further scrutiny.

This one hasn’t. At least not yet.

What the Experiment Actually Found

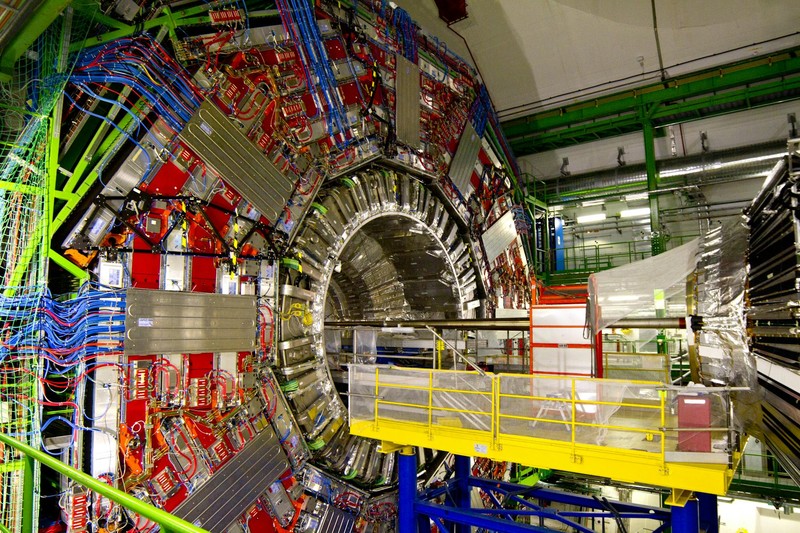

The result comes from LHCb, one of the four main experiments at the Large Hadron Collider. Rather than hunting for new heavy particles directly, LHCb looks for their indirect influence — the subtle ways that undiscovered particles might distort how known particles decay.

Researchers analysed roughly 650 billion decays of particles called B mesons, collected between 2011 and 2018. A B meson contains a bottom quark or antiquark bound to a lighter partner. In rare cases, it decays into a kaon, a pion, and two muons.

The key measurement concerned the angles at which those final particles emerge. The Standard Model makes precise predictions about how those angles should be distributed. The data disagrees.

“This is among the most significant results of the last few years at the LHC,” said William Barter, a particle physicist at the University of Edinburgh who works on the LHCb experiment.

A separate LHC experiment, CMS, has observed a similar discrepancy in the same decay with lower statistical significance, according to findings published earlier in 2025. The two results agree with each other — which makes physicists more confident the signal is real.

Why It’s Called a Penguin

In 1977, British theorist John Ellis lost a bet and was forced to include the word “penguin” in his next paper. The decay diagram he was describing happened to look vaguely like one. The name stuck for nearly half a century.

What makes penguin decays scientifically important is their rarity. Because the Standard Model allows so few B mesons to decay this way, its own contribution is small, so any influence from undiscovered particles should stand out more clearly than in common decays, where such a signal would be drowned out.

The process works through what physicists call a quantum loop: a bottom quark briefly transforms into virtual particles that pop in and out of existence before becoming a strange quark. If a heavy, unknown particle briefly enters that loop, it could skew the decay angles in precisely the way the data shows.

This kind of indirect observation has precedents. Radioactive beta decay was discovered in the 1890s, nearly 90 years before the W bosons responsible for it were directly observed at CERN in 1983.

What Comes Next

Four sigma is compelling but falls short of the five-sigma threshold that particle physics treats as a confirmed discovery — roughly a one in 1.7 million chance of being a statistical fluke.
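The odds quoted for 4 and 5 sigma come from treating a statistical fluctuation as normally (Gaussian) distributed. The short Python sketch below shows that conversion; it assumes a purely Gaussian fluctuation, and the function names are illustrative rather than taken from any experiment’s software. The figures in this article match the two-sided convention (a fluctuation that large in either direction). Under the one-sided convention, which counts only fluctuations in one direction and is commonly used for discovery claims in particle physics, the odds are roughly twice as long.

```python
import math


def two_sided_p(z: float) -> float:
    """Chance that Gaussian noise alone deviates by at least z sigma in either direction."""
    return math.erfc(z / math.sqrt(2))


def one_sided_p(z: float) -> float:
    """Chance that Gaussian noise alone deviates by at least z sigma in one chosen direction."""
    return 0.5 * math.erfc(z / math.sqrt(2))


if __name__ == "__main__":
    for z in (4, 5):
        # Express each probability as "1 in N" odds, as the article does.
        print(
            f"{z} sigma: two-sided 1 in {1 / two_sided_p(z):,.0f}, "
            f"one-sided 1 in {1 / one_sided_p(z):,.0f}"
        )
```

Run as a script, it gives about 1 in 15,800 for 4 sigma and about 1 in 1.7 million for 5 sigma on the two-sided convention, with the one-sided odds roughly double each of those.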

There is also a specific reason for caution. A competing process involving charm quarks — dubbed “charming penguins” — can produce the same final particles, and theorists struggle to calculate precisely how much it affects the results. Theory suggests charming penguins are unlikely to explain the full deviation, but their existence prevents anyone from claiming victory.

If the signal holds, two categories of new physics could explain it. One is a hypothetical particle called Z’ (Z prime), a heavier cousin of the Z boson that would mediate an entirely new force discriminating between different “flavours” of matter. Ben Allanach, a theoretical physicist at the University of Cambridge, has noted that Z’ could also help explain why particle masses vary so dramatically.

The other candidate is a leptoquark — a short-lived particle that would, at high energies, bridge two families of matter that the Standard Model keeps strictly separate: leptons (electrons, muons, neutrinos) and quarks.

Definitive answers will take time. Since 2018, LHCb has already recorded roughly three times as many B meson decays as were used in this analysis. Further upgrades planned for the 2030s could expand the dataset fifteenfold, according to researchers at the experiment, potentially allowing definitive claims.

The Standard Model has survived everything thrown at it. It may finally have met its first real crack.

Sources

- The exotic particles that could finally break the Standard Model — Nature

- Our Large Hadron Collider results hint at undiscovered physics — The Conversation

- Searching for new physics with the flavour changing neutral current decay B0→K*μμ — LHCb Outreach (CERN)
